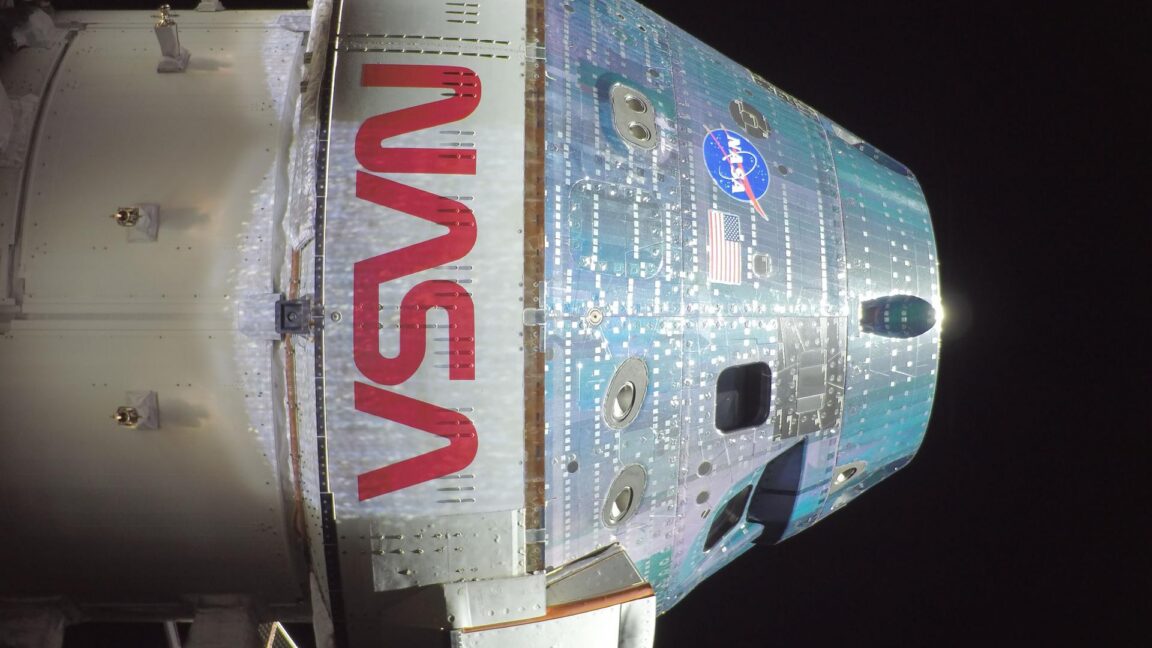

Artemis II: The Curious Reality of Spaceflight Progress

Ars Technica’s framing of Artemis II exposes a compelling truth: as missions stretch toward the Moon, the most mundane details become a focal point for engineering teams and the public alike. The piece uses light-touch subjects (frozen urine, cabin temperature, and mission hygiene) to spotlight the rigor behind successful space exploration. Although it is not primarily an AI story, the article touches on AI in three ways: autonomous systems, predictive maintenance, and the need for resilient, trustworthy software on crewed missions. The broader takeaway is that AI-enabled monitoring and decision-support tools are being tested in extreme environments where automated systems must reinforce human judgment, not replace it.

From a governance and risk perspective, the article illustrates how AI plays a quiet but indispensable role in spaceflight operations. Instrumentation streams feed AI-driven anomaly detection, life-support control, and safety assurance processes that bear directly on crew safety and mission success. The significance extends to public perception of AI reliability in critical domains: when readers see AI-related infrastructure deliver on its promises under intense scrutiny, trust builds and investor sentiment tends to follow. The challenge for aerospace programs and enterprise AI alike is to quantify and communicate residual risk, demonstrate end-to-end traceability of decisions, and keep AI systems auditable and explainable under mission-critical constraints. In sum, Artemis II suggests that AI-enabled systems are not abstract experiments but practical, mission-ready tools that can help humanity reach new frontiers while foregrounding safety, reliability, and human-in-the-loop oversight.